Directories


| Path | Synopsis |

|---|---|

|

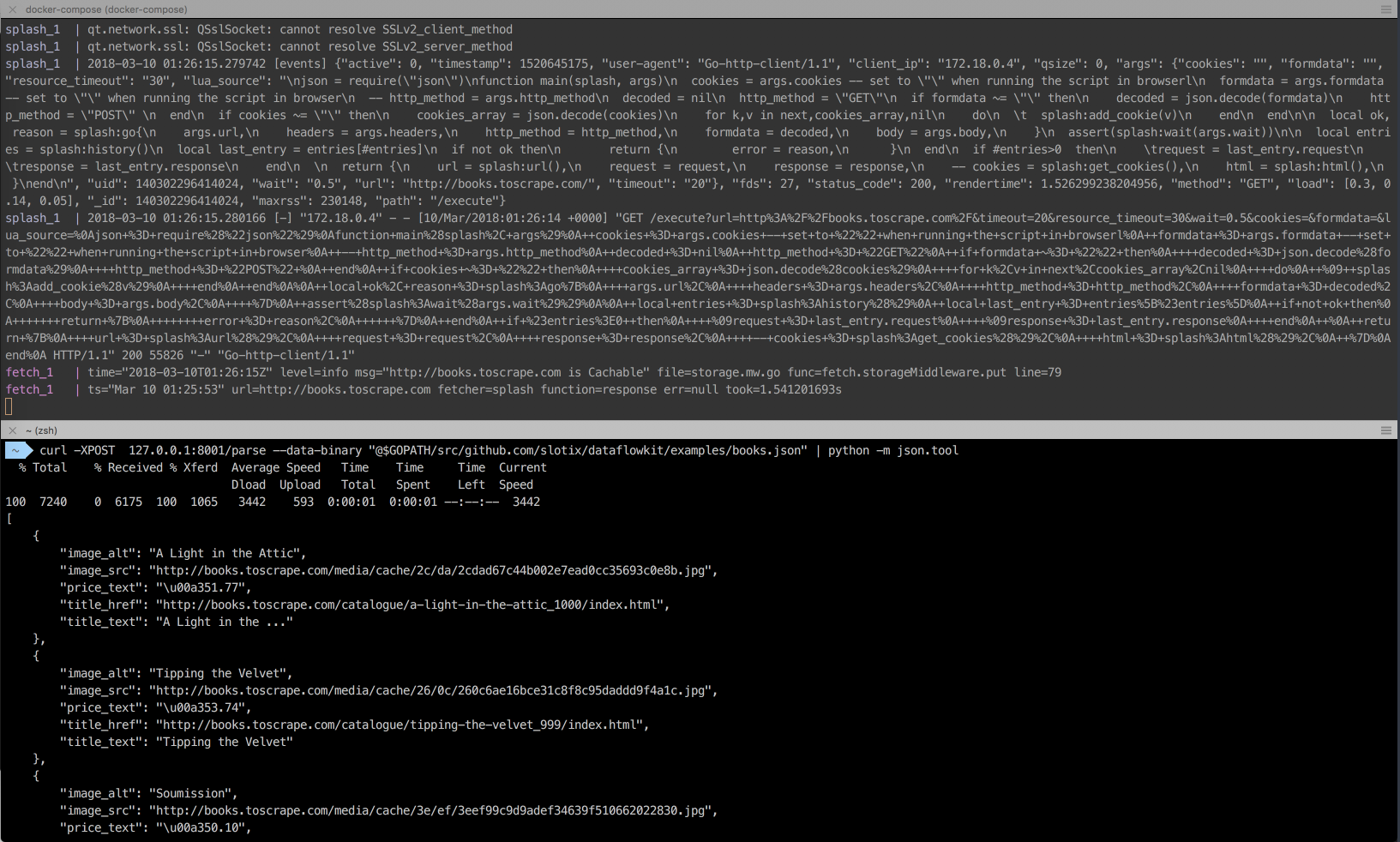

Package cmd of the Dataflow kit contains the following CLI daemons: - fetch.d service downloads html content from web pages to feed Dataflow kit scrapers.

|

Package cmd of the Dataflow kit contains the following CLI daemons: - fetch.d service downloads html content from web pages to feed Dataflow kit scrapers. |

|

fetch.cli

Fetcher CLI of the Dataflow kit downloads html content from web pages via Fetcher service endpoint.

|

Fetcher CLI of the Dataflow kit downloads html content from web pages via Fetcher service endpoint. |

|

fetch.d

Fetcher service of the Dataflow kit downloads html content from web pages to feed Dataflow kit scrapers.

|

Fetcher service of the Dataflow kit downloads html content from web pages to feed Dataflow kit scrapers. |

|

parse.d

Parse service of the Dataflow kit parses html content from web pages following the rules described in configuration JSON file.

|

Parse service of the Dataflow kit parses html content from web pages following the rules described in configuration JSON file. |

|

Package errs of the Dataflow kit lists specific error types like ParseError, BadPayload.

|

Package errs of the Dataflow kit lists specific error types like ParseError, BadPayload. |

|

Package fetch of the Dataflow kit is used by fetch.d service which downloads html content from web pages to feed Dataflow kit scrapers.

|

Package fetch of the Dataflow kit is used by fetch.d service which downloads html content from web pages to feed Dataflow kit scrapers. |

|

Package healthcheck of the Dataflow kit checks if specified services are alive.

|

Package healthcheck of the Dataflow kit checks if specified services are alive. |

|

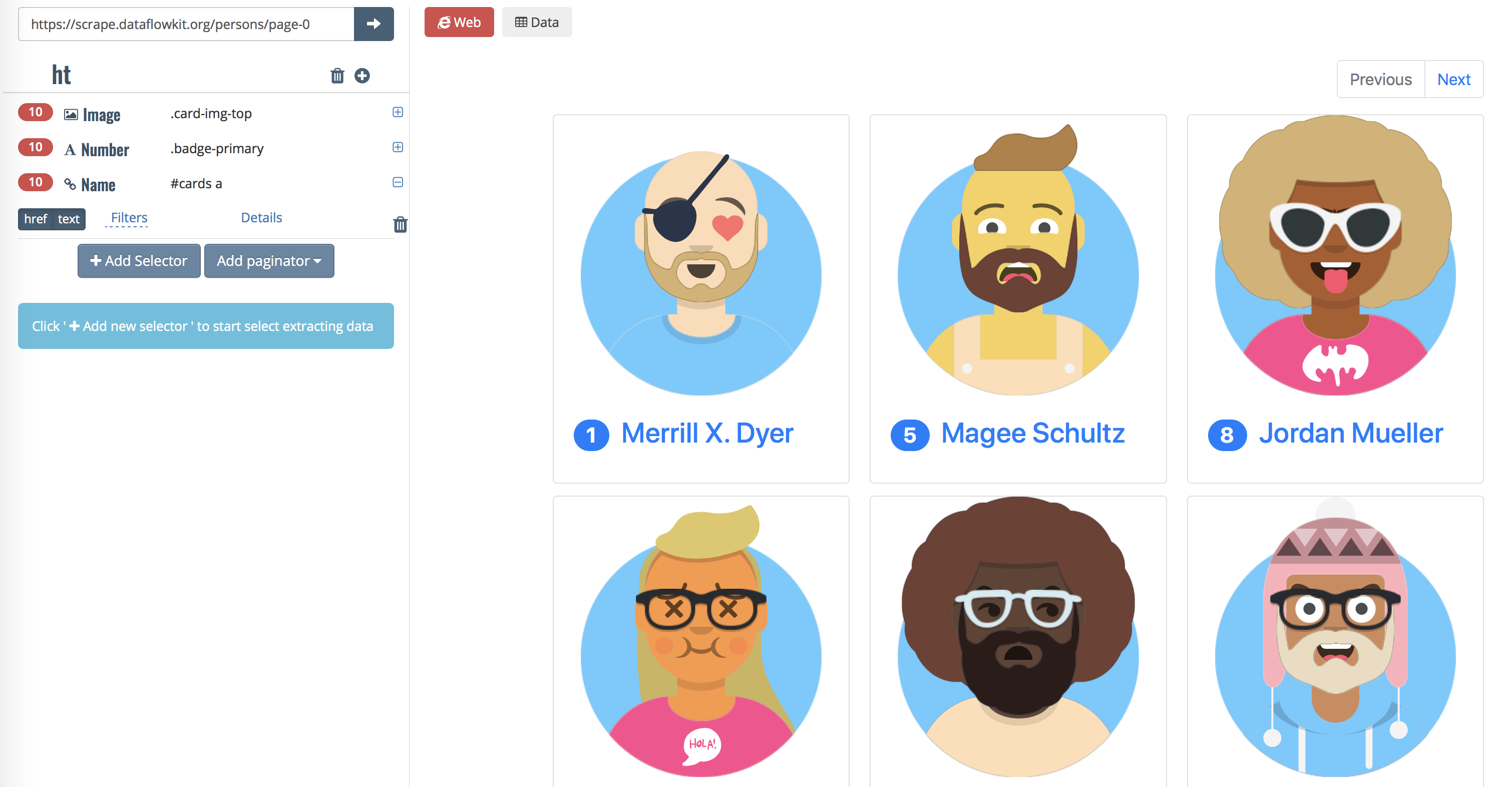

Package parse of the Dataflow kit is used by parse.d service which parses html content from web pages following the rules described in Payload JSON file.

|

Package parse of the Dataflow kit is used by parse.d service which parses html content from web pages following the rules described in Payload JSON file. |

|

Package scrape of the Dataflow kit is for structured data extraction from webpages starting from JSON payload processing to encoding scraped data to one of output formats like JSON, Excel, CSV, XML

|

Package scrape of the Dataflow kit is for structured data extraction from webpages starting from JSON payload processing to encoding scraped data to one of output formats like JSON, Excel, CSV, XML |

|

Package storage of the Dataflow kit describes Store interface for read/ write operations with downloaded data and parsed results.

|

Package storage of the Dataflow kit describes Store interface for read/ write operations with downloaded data and parsed results. |

|

Package utils of the Dataflow kit includes various functions and helpers to be used by other packages.

|

Package utils of the Dataflow kit includes various functions and helpers to be used by other packages. |
